Location: Boston, MA, USA

Skills:

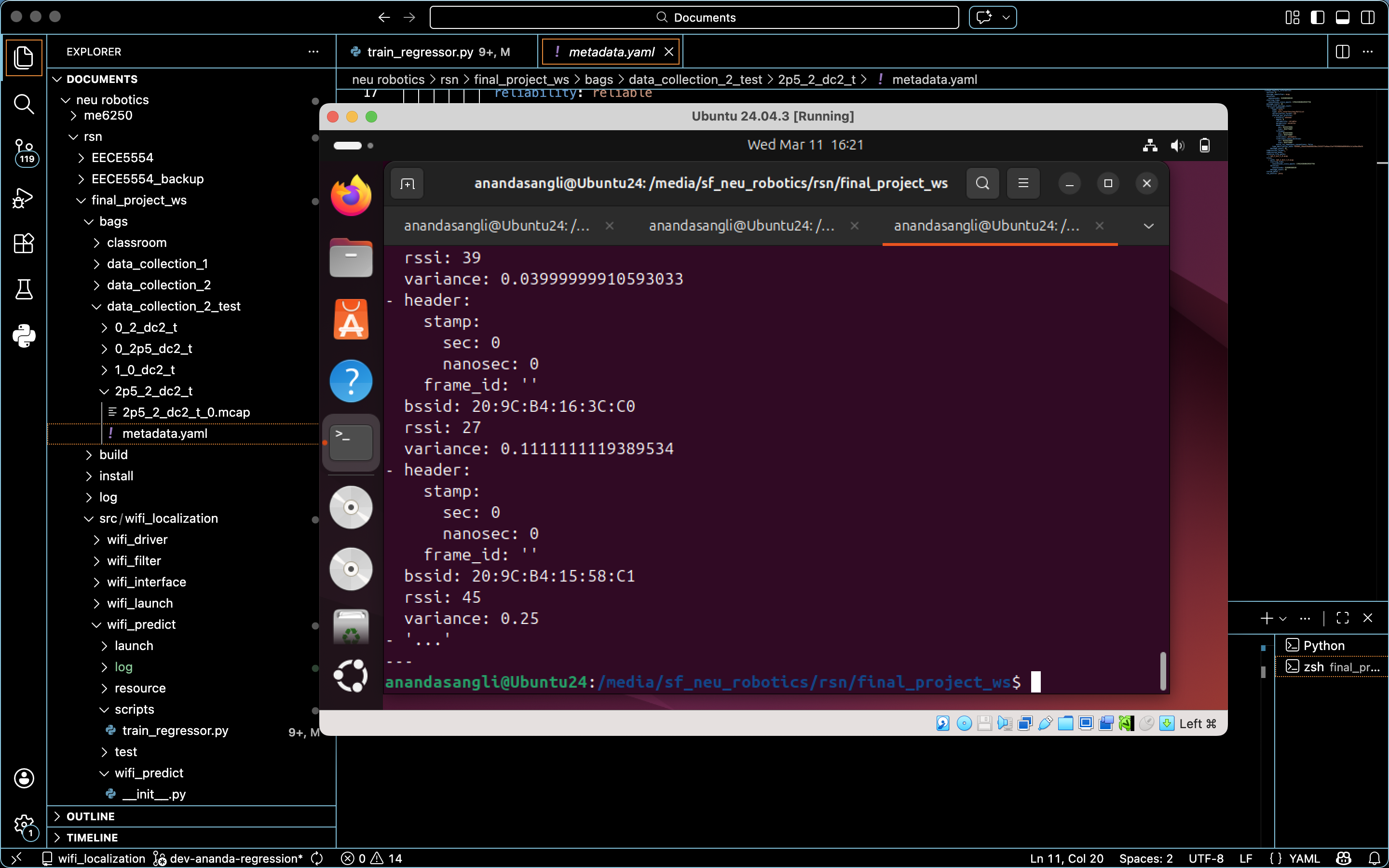

For the final project in the Robotics Sensing & Navigation (EECE 5554) course at Northeastern University, I worked with two peers to develop a Wi-Fi localization system using RSSI/BSSID Pair Training.

I worked on the development, implementation, and evaluation of learning-based localization methods to estimate planar position from Wi-Fi signal data. This involved:

- Designing regression models that take BSSID-indexed RSSI measurements as inputs and output estimated (x, y) coordinates

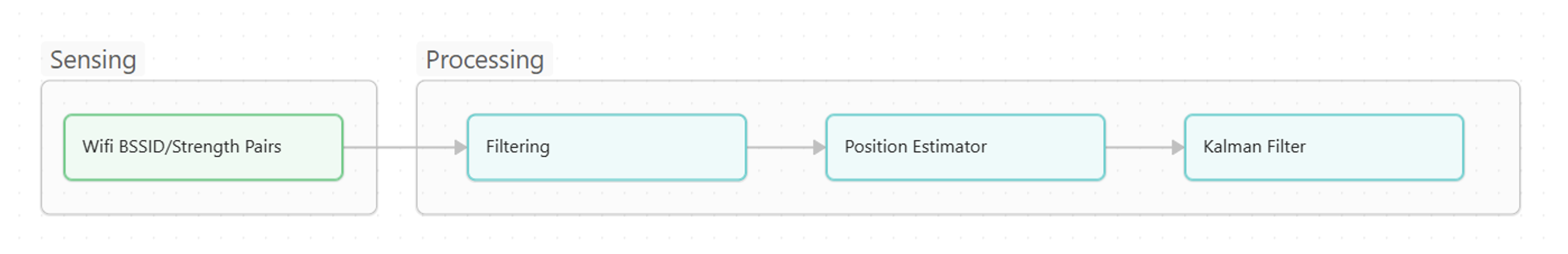

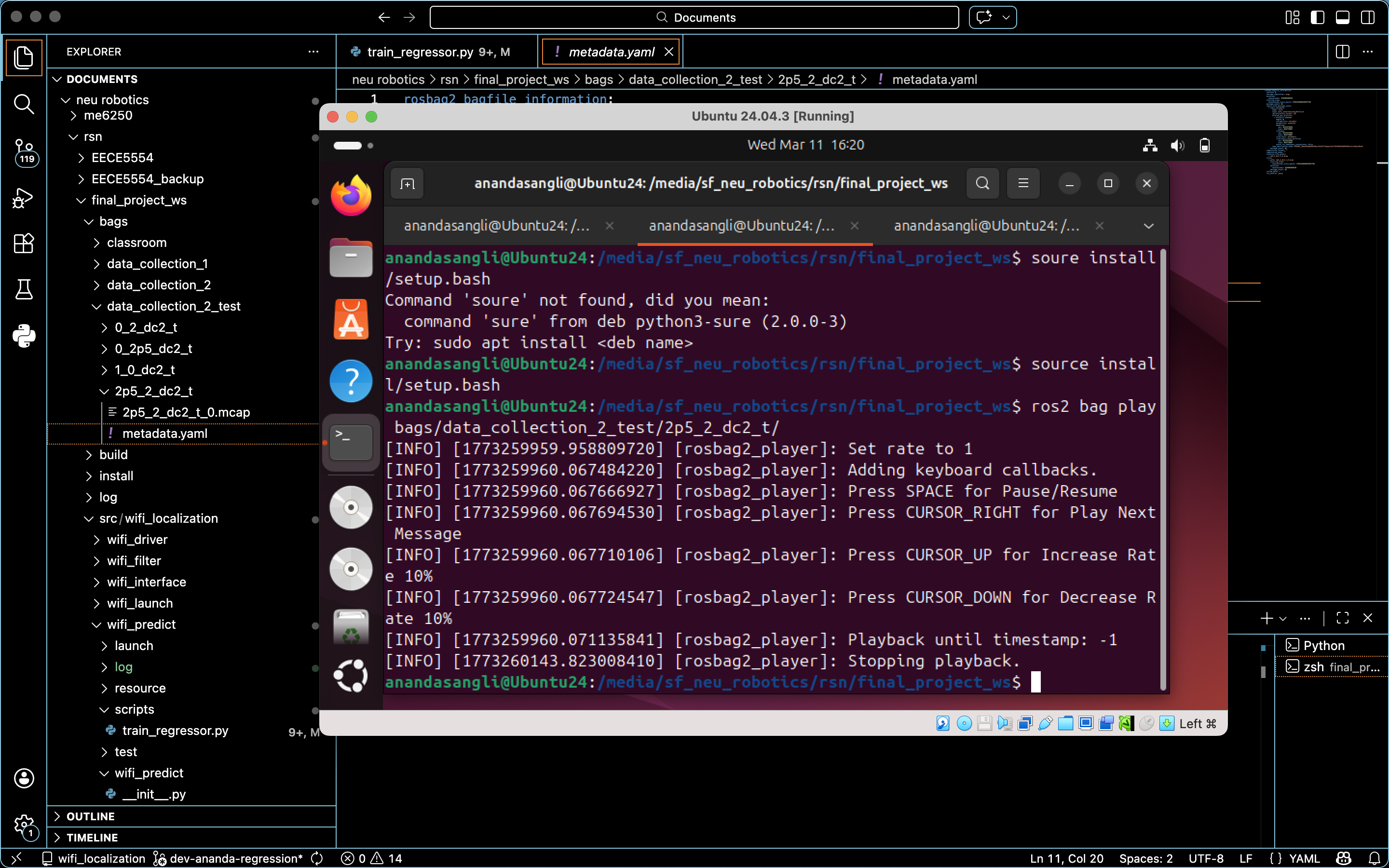

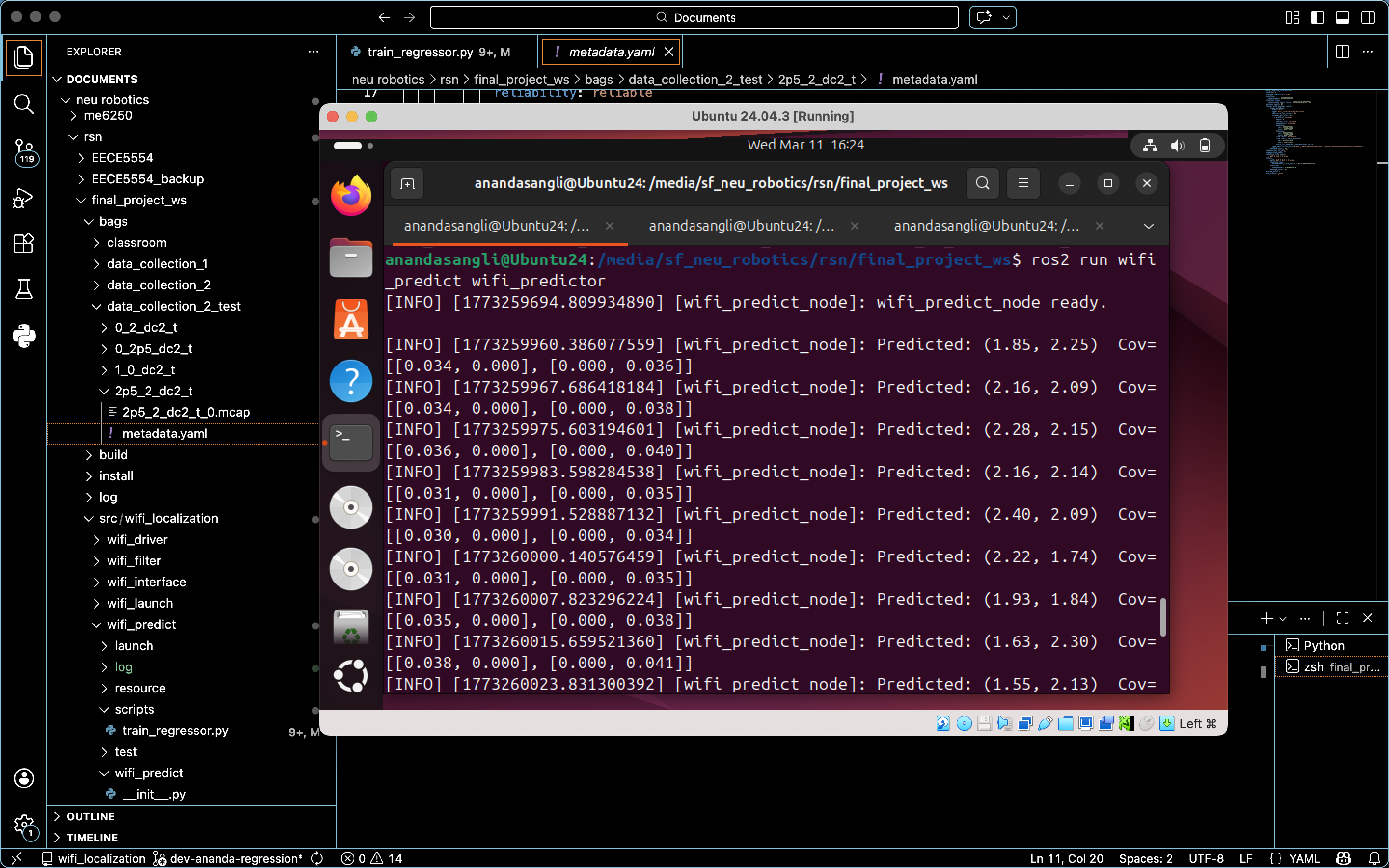

- Implementing these models as a ROS2 prediction node that integrates with the system-wide sensing and filtering pipeline

- Performing data analysis and quantitative evaluation to assess localization accuracy and system efficacy
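The metrics used in the evaluation are not listed here. One common way to quantify planar localization accuracy is the per-sample Euclidean distance between estimated and true (x, y) positions, summarized by its mean, median, RMSE, and 90th percentile. The sketch below assumes that choice; the function names are hypothetical.

```python
import numpy as np


def localization_errors(predicted, ground_truth):
    """Per-sample Euclidean error between estimated and true (x, y)."""
    return np.linalg.norm(np.asarray(predicted) - np.asarray(ground_truth), axis=1)


def summarize(errors):
    """Summary statistics for a vector of localization errors (same units as x, y)."""
    errors = np.asarray(errors, dtype=float)
    return {
        "mean": float(np.mean(errors)),
        "median": float(np.median(errors)),
        "rmse": float(np.sqrt(np.mean(errors**2))),
        "p90": float(np.percentile(errors, 90)),
    }
```

Reporting the median and a high percentile alongside the mean helps show whether a few large outliers dominate the average.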

My two teammates, Jack and Ben, focused on complementary components: the sensing and filtering stages of the pipeline. This included the ROS2 Wi-Fi driver, custom message definitions, low-pass filtering of raw RSSI measurements, and Kalman filtering for temporal smoothing. The core inference layer I developed is wrapped in a ROS2 Python node, with a separate Python script for training the regression models.

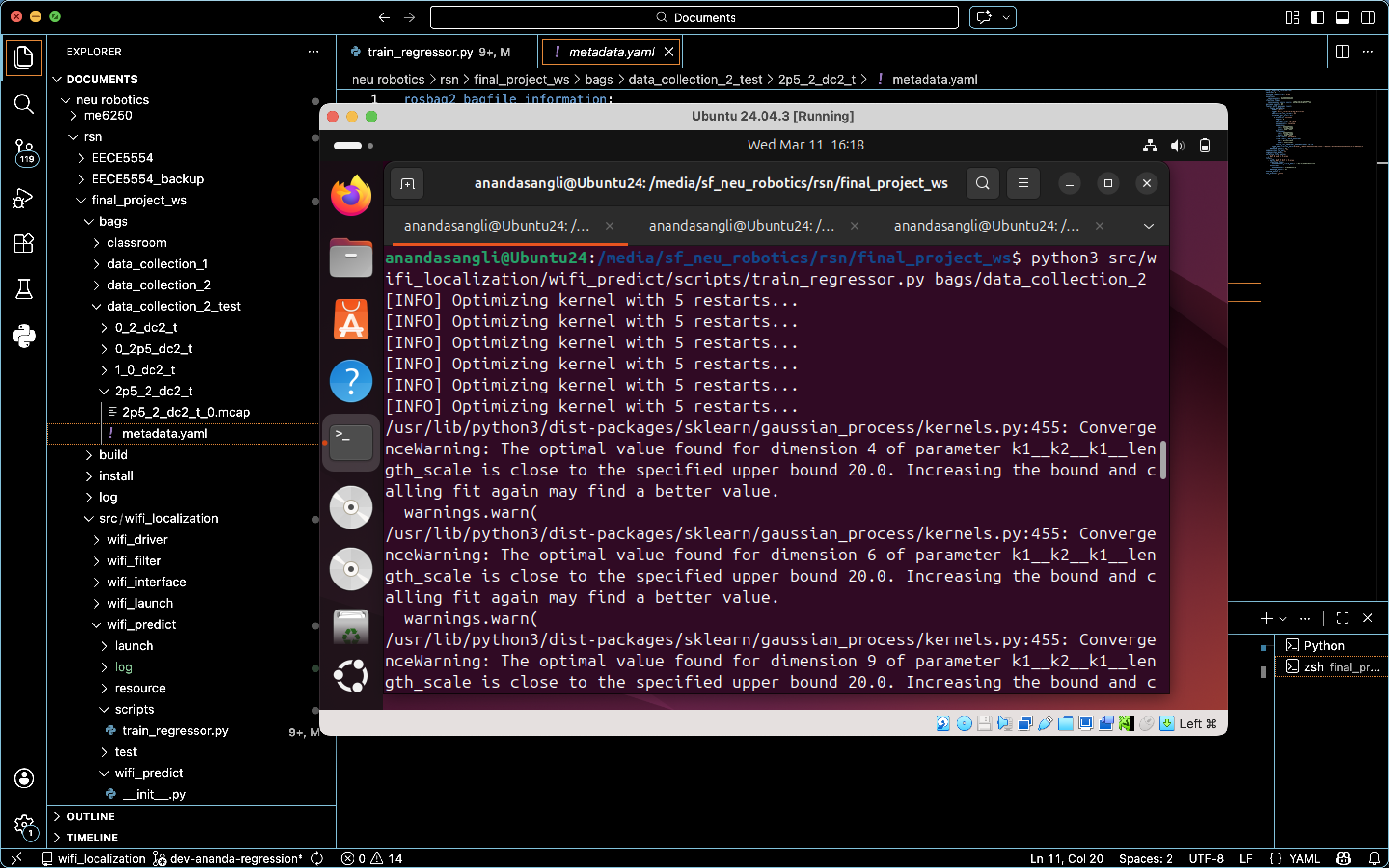

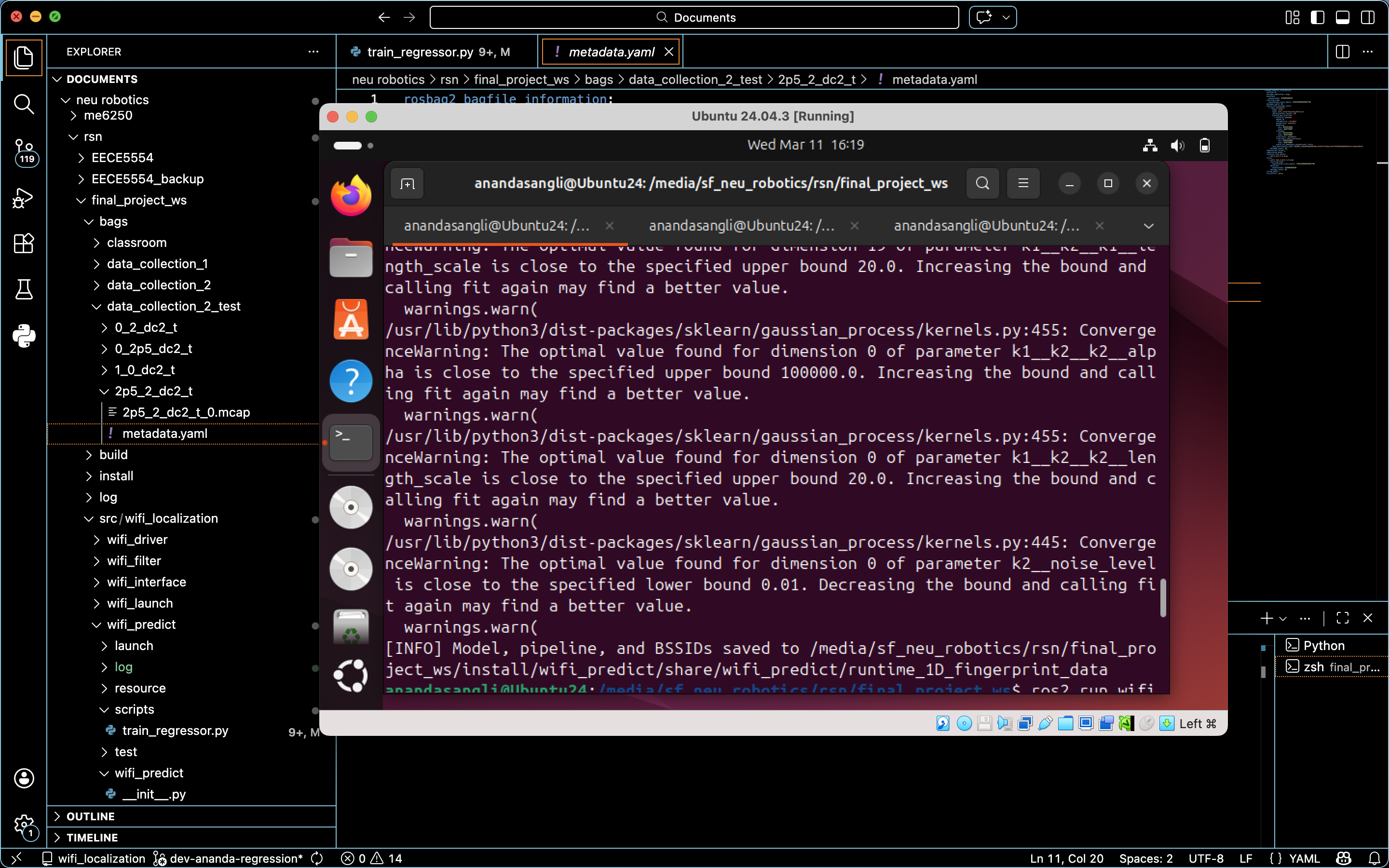

I followed a process of analyzing the data, experimenting with various models, and producing the position estimates needed by the downstream filtering stage.

Sensing Modality Data Challenges:

- High dimensionality & sparsity: each Wi-Fi scan observes only a subset of all access points

- Measurement noise & temporal variability

- Nonlinear spatial relationships

Despite these challenges, Wi-Fi was chosen because it requires no dedicated infrastructure and offers low-cost sensing.

Data Representation + Preprocessing

Each recorded Wi-Fi scan contained a variable-length set of access point observations, which standard regression models cannot accept directly. To address this, I used a fixed-dimensional representation indexed by BSSID, with one feature per access point, and used mean imputation to fill in missing RSSI values. This allowed consistently observed access points to be incorporated into the model even when individual scans missed them. I also implemented variance-based feature selection to remove access points with low informational content.
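The preprocessing steps above can be sketched with NumPy as follows. This is a minimal illustration, not the project's training script: the input format (a list of `{BSSID: RSSI}` dicts), the function names, and the `min_variance` threshold of 1.0 dBm² are assumptions.

```python
import numpy as np


def build_feature_matrix(scans, min_variance=1.0):
    """Convert variable-length scans into a fixed-width RSSI matrix.

    scans: list of dicts mapping BSSID -> RSSI in dBm; each scan may
    contain a different subset of access points.
    Returns (X, kept_bssids, kept_means).
    """
    # One column per BSSID seen anywhere in the training data.
    bssids = sorted({b for scan in scans for b in scan})
    col = {b: j for j, b in enumerate(bssids)}

    # NaN marks an access point that was not heard in a given scan.
    X = np.full((len(scans), len(bssids)), np.nan)
    for i, scan in enumerate(scans):
        for b, rssi in scan.items():
            X[i, col[b]] = rssi

    # Mean imputation: fill each gap with that access point's mean RSSI.
    means = np.nanmean(X, axis=0)
    rows, cols = np.where(np.isnan(X))
    X[rows, cols] = means[cols]

    # Variance-based selection: drop near-constant, low-information columns.
    keep = X.var(axis=0) > min_variance
    kept = [b for b, k in zip(bssids, keep) if k]
    return X[:, keep], kept, means[keep]


def vectorize_scan(scan, bssids, means):
    """Map a new scan onto the training layout, imputing unseen APs."""
    return np.array([scan.get(b, m) for b, m in zip(bssids, means)], dtype=float)
```

Keeping the selected BSSIDs and their training means is what lets a prediction node map each incoming scan onto the same feature layout the model was trained on.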

Candidate Modeling Approaches

I implemented three approaches of increasing complexity.

- Lazy learning (fingerprinting): Evaluated as an initial baseline because of its simplicity, but it scaled poorly with dataset size and did not produce reasonable estimates. Addressing its accuracy problems on the training dataset would likely have required downstream probabilistic filtering.

- Gaussian Process Regression (GPR): Trained a single multi-output GP and observed its ability to model nonlinear relationships while quantifying predictive uncertainty.

- Multi-output regression formulations using GPR: Two independent GPs, one for each spatial dimension (x, y), which yielded the highest predictive accuracy.
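The fingerprinting baseline can be sketched as a k-nearest-neighbor lookup over stored RSSI vectors. This is a hypothetical illustration (the function name and the `k=3` default are assumptions), but it makes the scaling problem concrete: every query is compared against every stored fingerprint, so cost grows linearly with the size of the survey.

```python
import numpy as np


def knn_fingerprint_predict(X_train, positions_train, X_query, k=3):
    """Estimate (x, y) by averaging the positions of the k closest fingerprints."""
    # Pairwise Euclidean distances in RSSI space: shape (n_query, n_train).
    dists = np.linalg.norm(X_query[:, None, :] - X_train[None, :, :], axis=2)
    # Indices of the k nearest stored fingerprints for each query.
    nearest = np.argsort(dists, axis=1)[:, :k]
    # Unweighted mean of their surveyed positions: shape (n_query, 2).
    return positions_train[nearest].mean(axis=1)
```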

Results

Gaussian Process Regression, combined with structured preprocessing and careful kernel design, proved well-suited to Wi-Fi-based indoor localization. We achieved two-dimensional localization with sufficiently accurate position estimates.
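The best-performing configuration, two independent GPs with one per spatial axis, can be sketched in plain NumPy. This is a minimal illustration rather than the project's code: the RBF kernel, the fixed hyperparameters (`length_scale`, `signal_var`, `noise_var`), and the class names `AxisGP` and `PerAxisGPLocalizer` are assumptions. A real setup would tune each axis's kernel separately, for example by maximizing the marginal likelihood.

```python
import numpy as np


def rbf_kernel(A, B, length_scale, signal_var):
    """Squared-exponential (RBF) kernel between rows of A and rows of B."""
    sq_dists = (
        np.sum(A**2, axis=1)[:, None]
        + np.sum(B**2, axis=1)[None, :]
        - 2.0 * A @ B.T
    )
    # Clip tiny negative values caused by floating-point cancellation.
    sq_dists = np.maximum(sq_dists, 0.0)
    return signal_var * np.exp(-0.5 * sq_dists / length_scale**2)


class AxisGP:
    """Exact GP regression for one output (a single spatial axis)."""

    def __init__(self, length_scale=10.0, signal_var=1.0, noise_var=0.1):
        # Fixed, illustrative hyperparameters (not tuned here).
        self.length_scale = length_scale
        self.signal_var = signal_var
        self.noise_var = noise_var

    def fit(self, X, y):
        self.X = np.asarray(X, dtype=float)
        y = np.asarray(y, dtype=float)
        # Standardize the target so signal_var is on a unit scale.
        self.y_mean = y.mean()
        self.y_std = y.std() or 1.0
        y_n = (y - self.y_mean) / self.y_std
        K = rbf_kernel(self.X, self.X, self.length_scale, self.signal_var)
        K += self.noise_var * np.eye(len(self.X))
        # Cholesky factor K = L L^T for a stable solve of K alpha = y_n.
        self.L = np.linalg.cholesky(K)
        self.alpha = np.linalg.solve(self.L.T, np.linalg.solve(self.L, y_n))
        return self

    def predict(self, X_new):
        """Return the posterior mean and variance at each row of X_new."""
        K_s = rbf_kernel(np.asarray(X_new, dtype=float), self.X,
                         self.length_scale, self.signal_var)
        mean_n = K_s @ self.alpha
        v = np.linalg.solve(self.L, K_s.T)
        var_n = self.signal_var - np.sum(v**2, axis=0)
        # Undo the target standardization.
        return self.y_mean + self.y_std * mean_n, var_n * self.y_std**2


class PerAxisGPLocalizer:
    """Two independent GPs: one maps RSSI features to x, the other to y."""

    def __init__(self, x_params=None, y_params=None):
        self.gp_x = AxisGP(**(x_params or {}))
        self.gp_y = AxisGP(**(y_params or {}))

    def fit(self, X, positions):
        positions = np.asarray(positions, dtype=float)
        self.gp_x.fit(X, positions[:, 0])
        self.gp_y.fit(X, positions[:, 1])
        return self

    def predict(self, X_new):
        mx, vx = self.gp_x.predict(X_new)
        my, vy = self.gp_y.predict(X_new)
        return np.column_stack([mx, my]), np.column_stack([vx, vy])
```

Separate per-axis models let each coordinate have its own kernel hyperparameters, and the returned posterior variances are the kind of uncertainty estimate a downstream Kalman filter could use as measurement noise.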

Please note that GenAI tools were used to help debug and develop the regression code, as this project was my first introduction to machine learning. I used it as an opportunity to learn as much as I could while keeping up with the rapid development timeline.
